Last year, we reached a milestone at Cherry Hill when we moved all of our projects into a managed deployment system. We have talked about Jenkins, one of the tools that we use to manage our workflow, and there has been continued interest in what our "recipe" consists of. Since we are using open source tools, and we think of ourselves as part of the (larger than Drupal) open source community, I want to share a bit more of what we use and how it is stitched together. Our hope is that this helps to spark a larger discussion of the tools others are using, so we can all learn from each other.

Git is a distributed revision control system. While we could use any revision control system, such as CVS or Subversion (and even though this is a given with most agencies, we strongly suggest you use *some* system over nothing at all), Git is fairly easy to use, has great documentation, and is already widely used within the Drupal community, which makes it easy for us to pull in contract developers and designers for larger projects.

Drush (or 'Drupal shell') is a command-line tool, written in PHP, for interacting with a Drupal application from the terminal. Since we as an agency primarily work with Drupal and all of us are very familiar with the Linux command line, we are big fans of Drush. It's easy to install, so we have it installed on all our servers and in our personal development environments. We specifically take advantage of Drush's site aliases, which let us target a specific site environment and run commands against it, either locally or from our build server.
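To make the alias idea concrete, here is a minimal Python sketch of how a build script might assemble Drush commands for one environment. The alias name `@example.dev` and the dump path are hypothetical, and the command names (`sql-dump`, `cache-clear all`, `updatedb`) are the ones used with Drupal 7-era Drush; other Drush versions may differ.

```python
from typing import List


def drush(alias: str, *args: str) -> List[str]:
    """Build the argument list for running a Drush command against a site alias."""
    if not alias.startswith("@"):
        raise ValueError("Drush site aliases start with '@'")
    return ["drush", alias, *args]


# Hypothetical alias and file path, for illustration only.
dev_steps = [
    drush("@example.dev", "sql-dump", "--result-file=/tmp/example-dev.sql"),
    drush("@example.dev", "cache-clear", "all"),
    drush("@example.dev", "updatedb", "--yes"),
]
```

Each list can be handed to `subprocess.run(cmd, check=True)` on a machine where Drush and the alias are configured. Building the lists separately from running them keeps the sketch easy to inspect and test.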

Capistrano is a remote server automation and deployment tool written in Ruby. We use Capistrano to manage our various Drupal projects on our servers, which gives us a way to deploy a new version of the codebase, create snapshots, roll back our codebase (and database) to a previous snapshot if we find out the code was not supposed to be deployed, and run various other workflows (such as syncing the database/files from our production website to our development website). This is accomplished by integrating Capistrano and Drush so that we have a suite of Drush tasks available to run on our servers. Our repo for this is available on GitHub. Note that we are using Capistrano version 2.x rather than version 3.x. There are a number of changes to Capistrano in version 3, and our integration would need to be heavily revised as a result. This will come in time.

We have been asked numerous times why we chose Capistrano as the driving tool to manage our deployments. The simple answer is: Capistrano does a lot. In fact, it may be too much for other projects. But in our case, we want the full core set of features it runs. Additionally, it is well documented and scales well for the kinds of projects we do.
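The deploy and rollback behaviour described above can be illustrated with a small Python sketch of a release-directory layout: each deploy gets its own directory and a `current` symlink points at the active one. This is not our Capistrano configuration, only an illustration of the idea. It assumes timestamp-named release directories and a POSIX filesystem, the function names are hypothetical, and database snapshots are left out.

```python
import os
import tempfile
from pathlib import Path


def activate(root: Path, release: str) -> None:
    """Point root/current at root/releases/<release>."""
    target = root / "releases" / release
    tmp = root / "current.tmp"
    if tmp.is_symlink():
        tmp.unlink()  # clean up a leftover link from an interrupted run
    os.symlink(target, tmp)
    # Renaming over the old link swaps it in one step on POSIX systems.
    os.replace(tmp, root / "current")


def deploy(root: Path, release: str) -> None:
    """Create a new release directory and make it the current one."""
    (root / "releases" / release).mkdir(parents=True)
    activate(root, release)


def rollback(root: Path) -> str:
    """Re-point 'current' at the release before the active one."""
    releases = sorted(p.name for p in (root / "releases").iterdir())
    active = (root / "current").resolve().name
    index = releases.index(active)
    if index == 0:
        raise RuntimeError("no earlier release to roll back to")
    activate(root, releases[index - 1])
    return releases[index - 1]
```

For example, after `deploy(root, "20140101000000")` and `deploy(root, "20140102000000")` on a scratch directory, calling `rollback(root)` points `current` back at the first release without touching the files of either one.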

Saucelabs is a hosted Selenium testing service which lets us perform browser-based testing of our various projects (both automated and manual) in different browsers. This has turned out to be a huge timesaver at Cherry Hill. Since we have a number of clients on older versions of Windows (and consequently, older versions of IE) and our team is primarily Mac/Ubuntu-based, this allows us to test on various browsers without installing several fairly large VMs on our systems. Additionally, we can share a session with other team members, which helps a lot with debugging. Finally, since this service is built around Selenium, we have tied it into a PHP testing framework called Behat to automate visiting a site and running tests to help ensure various user actions in our web application work as expected.

Jenkins is an open source continuous integration tool written in Java. It uses a concept of builds to run tests, shell scripts, and various other commands and provides an easy way to see whether the build of a particular job has failed or has run successfully. In our case, we have intermixed the other four technologies mentioned above into this workflow for continuous deployment/integration:

- Person commits code to git (repeated until ready to push).

- Person pushes code to hosted git repo (we use unfuddle; you can use github, bitbucket, the slew of other hosted git solutions or roll your own with something like Gitlab).

- Code repository notifies Jenkins that git repo has been updated.

- Jenkins automatically initiates 2 jobs in tandem.

    - Capistrano script starts running to deploy new code to dev server.

        - Create DB backup via drush.

        - Clear cache via drush.

        - Update codebase.

        - Run any updates via drush.

        - Symlink new codebase as 'current'.

        - Script returns back pass/fail based on how the deploy goes.

    - Jenkins initiates a behat test run on the new code.

        - Behat script starts running.

        - Saucelabs initiates new selenium session with browser and runs through user actions (visit page, search for content, add content, etc).

        - Behat returns back XML results which Jenkins parses as pass/fail.


Our dev environment builds are initiated automatically, whereas our production environment builds are initiated manually. We keep production as a manual step (it requires just pushing a button) to ensure that a conscious decision was made to move the code to production. Just because code has been committed does not mean we want it live for everyone to see just yet!

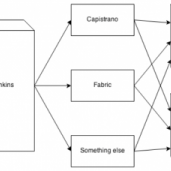

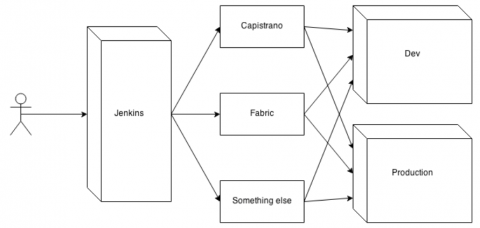

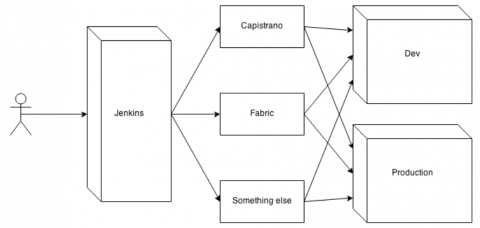

We have been asked many times why we use the set of tools we do. The answer is that we use tools that we feel have a strong community behind them, where we can ask questions and have a better chance of getting answers. Moreover, the most important tool we have in our system is actually Jenkins. See the image below:

From our image, we can see that the user primarily interacts with Jenkins. This comes in the form of pushing code commits, visiting the website, or receiving notifications on passing/failing builds. The builds that Jenkins initiates can be:

- tests, which can come via Behat, Codeception, RSpec, etc.

- deployments, which can come via Capistrano, Fabric, or whatever secret sauce you decide you want to use.

- or any other tasks such as syncing the DB from a production site down to the dev environment.

This becomes very powerful because it means that we can change our deployment workflow in the future and the interactions for the user would remain the same. For example, we are considering using Docker to spin up test environments based on feature branches. While our entire backend infrastructure and code might change, the user is still going to see the same set of options.
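The idea that the backend can change while the user's options stay the same can be sketched as a small interface in Python. Everything here is hypothetical: the class names, the job label, and the assumption that each tool is set up with a stage or task per environment plus a `deploy` task.

```python
from dataclasses import dataclass
from typing import List, Protocol


class DeployBackend(Protocol):
    """Anything that can turn 'deploy to <environment>' into a command."""

    def deploy_command(self, environment: str) -> List[str]: ...


class CapistranoBackend:
    def deploy_command(self, environment: str) -> List[str]:
        # Assumes a multistage Capistrano setup with one stage per environment.
        return ["cap", environment, "deploy"]


class FabricBackend:
    def deploy_command(self, environment: str) -> List[str]:
        # Assumes a fabfile defining a task per environment and a "deploy" task.
        return ["fab", environment, "deploy"]


@dataclass
class Job:
    label: str          # what the user sees in the CI interface
    command: List[str]  # what the CI server runs behind that label


def deploy_job(backend: DeployBackend, environment: str) -> Job:
    """Build the user-facing job; only the command depends on the backend."""
    return Job(
        label=f"Deploy to {environment}",
        command=backend.deploy_command(environment),
    )
```

Swapping `CapistranoBackend()` for `FabricBackend()` changes the command that runs, while the label the user clicks stays "Deploy to dev".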

Finally, a post with this many tools isn't worthwhile unless we talk about the benefits we have gotten from setting all of this up:

- Fewer ssh accounts on our servers. When we pulled in contractors to help us out with projects, either they had to contact someone at Cherry Hill whenever they wanted their changes deployed to our dev environment, or we had to create an ssh account for the contractor (who may have varying levels of skill at the command line). Having everyone interface with Jenkins instead allows us to limit ssh accounts to sysadmins and other select staff.

- Fewer interruptions. Having ssh accounts for contractors is not the best idea, so we would have them contact us instead. Having your own work interrupted by a message to push code to an environment or to run tests manually means:

    - Taking care of the immediate needs of the current request. This bypasses any priority you may have in the work you are currently doing.

    - Readjusting back to the issues you were working on before the request. Studies have shown it can take as much as 30 minutes to get readjusted.

- Catching errors before the client. We are trying to incorporate more and more automated tests of our web applications (which are run by Jenkins) so we can catch and resolve errors before deploying the changes to production and having a client (and their constituents) see the errors. While we are not going to catch all the issues, we can certainly aim to catch as many as possible. This can be a huge win over manual testing: we have worked with clients that will thoroughly test their site manually before a code deployment, and that process can take hours. Seeing those hours turn into a programmable test suite that takes less than 15 minutes is a huge timesaver.

- Happier team. My coworkers and contractors can feel confident in deploying their changes to an environment. The steps to deploy are automatically carried out without a need for them to remember commands and they can work on solving client problems instead of working on solving server issues. Managers can carry out a deployment to production with the push of a button. And our devops team can work on making our infrastructure even better.

If you wish to see the slides of our presentation, we have uploaded them to slideshare.